Merge moves with HDP-HMM

How to try merge moves efficiently for time-series datasets.

This example reviews three possible ways to plan and execute merge proposals:

- try merging all pairs of clusters

- pick fewer merge pairs (at most 5 per cluster) in a size-biased way

- pick fewer merge pairs (at most 5 per cluster) in an objective-driven way

# sphinx_gallery_thumbnail_number = 2

import bnpy

import numpy as np

import os

from matplotlib import pylab

import seaborn as sns

FIG_SIZE = (10, 5)

pylab.rcParams['figure.figsize'] = FIG_SIZE

Setup: Load data

# Read bnpy's built-in "Mocap6" dataset from file.

dataset_path = os.path.join(bnpy.DATASET_PATH, 'mocap6')

dataset = bnpy.data.GroupXData.read_npz(
    os.path.join(dataset_path, 'dataset.npz'))

Setup: Initialization hyperparameters

init_kwargs = dict(
    K=20,
    initname='randexamples',
    )

alg_kwargs = dict(
    nLap=29,
    nTask=1, nBatch=1, convergeThr=0.0001,
    )

Setup: HDP-HMM hyperparameters

hdphmm_kwargs = dict(
    gamma = 5.0,       # top-level Dirichlet concentration parameter
    transAlpha = 0.5,  # trans-level Dirichlet concentration parameter
    )

Setup: Gaussian observation model hyperparameters

gauss_kwargs = dict(
    sF = 1.0,          # Set prior so E[covariance] = identity
    ECovMat = 'eye',
    )

All-Pairs : Try all possible pairs of merges every 10 laps

This is expensive, but a good exhaustive test. With K=20 clusters there are 190 distinct pairs to consider.
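To make the cost concrete, here is a minimal sketch that counts the unordered pairs an exhaustive plan must consider. The helper name `count_all_pairs` is hypothetical and is not part of bnpy.

```python
from itertools import combinations


def count_all_pairs(K):
    """Count unordered cluster pairs (a, b) with a < b among K clusters."""
    return sum(1 for _ in combinations(range(K), 2))


K = 20  # same initial number of clusters as init_kwargs in this example
n_pairs = count_all_pairs(K)

# Closed form for the same quantity: K * (K - 1) / 2
assert n_pairs == K * (K - 1) // 2
print(n_pairs)
```

The count grows quadratically in K, which is why the cheaper strategies in this example cap the number of pairs per cluster. In the all-pairs log, the lap-10 line reports 136 attempted and 54 skipped pairs, which sums to the same 190.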

allpairs_merge_kwargs = dict(
    m_startLap = 10,
    # Set limits to number of merges attempted each lap.
    # This value specifies max number of tries for each cluster
    # Setting this very high (to 50) effectively means try all pairs
    m_maxNumPairsContainingComp = 50,
    # Set "reactivation" limits
    # So that each cluster is eligible again after 10 passes thru dataset
    # Or when its size changes by 400%
    m_nLapToReactivate = 10,
    m_minPercChangeInNumAtomsToReactivate = 400 * 0.01,
    # Specify how to rank pairs (determines order in which merges are tried)
    # 'total_size' and 'descending' means try largest combined clusters first
    m_pair_ranking_procedure = 'total_size',
    m_pair_ranking_direction = 'descending',
    )

allpairs_trained_model, allpairs_info_dict = bnpy.run(
    dataset, 'HDPHMM', 'DiagGauss', 'memoVB',
    output_path='/tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=all_pairs/',
    moves='merge,shuffle',
    # Combine all keyword dicts into one set of keyword arguments
    **{**alg_kwargs,
       **init_kwargs,
       **hdphmm_kwargs,
       **gauss_kwargs,
       **allpairs_merge_kwargs}
    )

Dataset Summary:

GroupXData

total size: 6 units

batch size: 6 units

num. batches: 1

Allocation Model: None

Obs. Data Model: Gaussian with diagonal covariance.

Obs. Data Prior: independent Gauss-Wishart prior on each dimension

Wishart params

nu = 14 ...

beta = [ 12 12] ...

Expectations

E[ mean[k]] =

[ 0 0] ...

E[ covar[k]] =

[[1. 0.]

[0. 1.]] ...

Initialization:

initname = randexamples

K = 20 (number of clusters)

seed = 1607680

elapsed_time: 0.0 sec

Learn Alg: memoVB | task 1/1 | alg. seed: 1607680 | data order seed: 8541952

task_output_path: /tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=all_pairs/1

MERGE @ lap 1.00: Disabled. Cannot plan merge on first lap. Need valid SS that represent whole dataset.

1.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.716756017e+00 |

MERGE @ lap 2.00: Disabled. Waiting for lap >= 10 (--m_startLap).

2.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.611824567e+00 | Ndiff 30.418

MERGE @ lap 3.00: Disabled. Waiting for lap >= 10 (--m_startLap).

3.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.579928854e+00 | Ndiff 18.080

MERGE @ lap 4.00: Disabled. Waiting for lap >= 10 (--m_startLap).

4.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.565367190e+00 | Ndiff 19.077

MERGE @ lap 5.00: Disabled. Waiting for lap >= 10 (--m_startLap).

5.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.552412231e+00 | Ndiff 18.396

MERGE @ lap 6.00: Disabled. Waiting for lap >= 10 (--m_startLap).

6.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.547245682e+00 | Ndiff 9.353

MERGE @ lap 7.00: Disabled. Waiting for lap >= 10 (--m_startLap).

7.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.545361199e+00 | Ndiff 3.043

MERGE @ lap 8.00: Disabled. Waiting for lap >= 10 (--m_startLap).

8.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.542436507e+00 | Ndiff 8.684

MERGE @ lap 9.00: Disabled. Waiting for lap >= 10 (--m_startLap).

9.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.533845903e+00 | Ndiff 8.233

MERGE @ lap 10.00 : 5/136 accepted. Ndiff 213.29. 54 skipped.

10.000/29 after 20 sec. | 229.7 MiB | K 15 | loss 3.520225074e+00 | Ndiff 8.233

MERGE @ lap 11.00 : 0/34 accepted. Ndiff 0.00. 0 skipped.

11.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.512417678e+00 | Ndiff 9.249

MERGE @ lap 12.00: No promising candidates, so no attempts.

12.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.510801853e+00 | Ndiff 9.939

MERGE @ lap 13.00: No promising candidates, so no attempts.

13.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.510128661e+00 | Ndiff 12.145

MERGE @ lap 14.00: No promising candidates, so no attempts.

14.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.509861771e+00 | Ndiff 6.725

MERGE @ lap 15.00: No promising candidates, so no attempts.

15.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.509386291e+00 | Ndiff 3.376

MERGE @ lap 16.00: No promising candidates, so no attempts.

16.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.509363787e+00 | Ndiff 1.866

MERGE @ lap 17.00: No promising candidates, so no attempts.

17.000/29 after 25 sec. | 229.7 MiB | K 15 | loss 3.509368704e+00 | Ndiff 1.466

MERGE @ lap 18.00: No promising candidates, so no attempts.

18.000/29 after 26 sec. | 229.7 MiB | K 15 | loss 3.509322290e+00 | Ndiff 1.627

MERGE @ lap 19.00: No promising candidates, so no attempts.

19.000/29 after 26 sec. | 229.7 MiB | K 15 | loss 3.509288542e+00 | Ndiff 0.863

MERGE @ lap 20.00 : 0/71 accepted. Ndiff 0.00. 0 skipped.

20.000/29 after 34 sec. | 229.7 MiB | K 15 | loss 3.509285892e+00 | Ndiff 0.429

MERGE @ lap 21.00 : 0/34 accepted. Ndiff 0.00. 0 skipped.

21.000/29 after 38 sec. | 229.7 MiB | K 15 | loss 3.509285238e+00 | Ndiff 0.273

MERGE @ lap 22.00: No promising candidates, so no attempts.

22.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509285001e+00 | Ndiff 0.176

MERGE @ lap 23.00: No promising candidates, so no attempts.

23.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284909e+00 | Ndiff 0.114

MERGE @ lap 24.00: No promising candidates, so no attempts.

24.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284870e+00 | Ndiff 0.075

MERGE @ lap 25.00: No promising candidates, so no attempts.

25.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284852e+00 | Ndiff 0.054

MERGE @ lap 26.00: No promising candidates, so no attempts.

26.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284844e+00 | Ndiff 0.039

MERGE @ lap 27.00: No promising candidates, so no attempts.

27.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284840e+00 | Ndiff 0.028

MERGE @ lap 28.00: No promising candidates, so no attempts.

28.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284838e+00 | Ndiff 0.020

MERGE @ lap 29.00: No promising candidates, so no attempts.

29.000/29 after 39 sec. | 229.7 MiB | K 15 | loss 3.509284837e+00 | Ndiff 0.014

... done. not converged. max laps thru data exceeded.
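In the log above, merges are attempted at laps 10 and 11, then not again until laps 20 and 21. That pattern is consistent with the "reactivation" settings described in the comments. The sketch below shows one plausible reading of that rule. The function `is_eligible` and its arguments are hypothetical, and the exact semantics inside bnpy are an assumption here, not taken from its source code.

```python
def is_eligible(lap_now, lap_last_failed, size_now, size_at_failure,
                n_lap_to_reactivate=10, min_frac_change=4.0):
    """Hypothetical rule: may a cluster with a rejected merge be tried again?

    Assumed reading of the settings in this example:
    * n_lap_to_reactivate mirrors m_nLapToReactivate = 10
    * min_frac_change mirrors m_minPercChangeInNumAtomsToReactivate = 4.0
    """
    # Condition 1: enough passes through the dataset since the failed attempt
    waited_long_enough = (lap_now - lap_last_failed) >= n_lap_to_reactivate
    # Condition 2: cluster size changed by at least the given fraction
    frac_change = abs(size_now - size_at_failure) / max(size_at_failure, 1e-12)
    changed_enough = frac_change >= min_frac_change
    return waited_long_enough or changed_enough


# A cluster rejected at lap 10 whose size barely moves:
print(is_eligible(15, 10, size_now=110.0, size_at_failure=100.0))
print(is_eligible(20, 10, size_now=110.0, size_at_failure=100.0))
```

Under this reading, a cluster rejected at lap 10 stays inactive at lap 15 and becomes eligible again at lap 20, unless its size changes sharply in between.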

Large-Pairs : Try 5-largest-size pairs of merges every 10 laps

This is much cheaper than all pairs. Let’s see how well it does.
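As a rough illustration of size-biased planning, the sketch below ranks all pairs by combined size in descending order and greedily keeps a pair only while both clusters are under a per-cluster limit. This is a simplified stand-in, not bnpy's actual planner. The function name and the toy sizes are made up for illustration.

```python
from itertools import combinations


def select_pairs_by_total_size(sizes, max_pairs_per_cluster=5):
    """Greedy sketch: visit pairs by descending combined size and keep a pair
    only if both clusters are still below max_pairs_per_cluster."""
    pairs = sorted(
        combinations(range(len(sizes)), 2),
        key=lambda ab: sizes[ab[0]] + sizes[ab[1]],
        reverse=True)
    n_used = [0] * len(sizes)  # how many kept pairs contain each cluster
    chosen = []
    for a, b in pairs:
        if (n_used[a] < max_pairs_per_cluster
                and n_used[b] < max_pairs_per_cluster):
            chosen.append((a, b))
            n_used[a] += 1
            n_used[b] += 1
    return chosen


# Toy cluster sizes (illustrative only): cluster 0 is largest, 19 is smallest
sizes = [float(20 - k) for k in range(20)]
chosen = select_pairs_by_total_size(sizes, max_pairs_per_cluster=5)
print(len(chosen), chosen[:3])
```

With 20 clusters and at most 5 pairs per cluster, no plan of this kind can contain more than 20 * 5 / 2 = 50 pairs, compared with 190 for the exhaustive plan.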

largepairs_merge_kwargs = dict(
    m_startLap = 10,
    # Set limits to number of merges attempted each lap.
    # This value specifies max number of tries for each cluster
    m_maxNumPairsContainingComp = 5,
    # Set "reactivation" limits
    # So that each cluster is eligible again after 10 passes thru dataset
    # Or when its size changes by 400%
    m_nLapToReactivate = 10,
    m_minPercChangeInNumAtomsToReactivate = 400 * 0.01,
    # Specify how to rank pairs (determines order in which merges are tried)
    # 'total_size' and 'descending' means try pairs with largest combined size first
    m_pair_ranking_procedure = 'total_size',
    m_pair_ranking_direction = 'descending',
    )

largepairs_trained_model, largepairs_info_dict = bnpy.run(
    dataset, 'HDPHMM', 'DiagGauss', 'memoVB',
    output_path='/tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=large_pairs/',
    moves='merge,shuffle',
    # Combine all keyword dicts into one set of keyword arguments
    **{**alg_kwargs,
       **init_kwargs,
       **hdphmm_kwargs,
       **gauss_kwargs,
       **largepairs_merge_kwargs}
    )

Dataset Summary:

GroupXData

total size: 6 units

batch size: 6 units

num. batches: 1

Allocation Model: None

Obs. Data Model: Gaussian with diagonal covariance.

Obs. Data Prior: independent Gauss-Wishart prior on each dimension

Wishart params

nu = 14 ...

beta = [ 12 12] ...

Expectations

E[ mean[k]] =

[ 0 0] ...

E[ covar[k]] =

[[1. 0.]

[0. 1.]] ...

Initialization:

initname = randexamples

K = 20 (number of clusters)

seed = 1607680

elapsed_time: 0.0 sec

Learn Alg: memoVB | task 1/1 | alg. seed: 1607680 | data order seed: 8541952

task_output_path: /tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=large_pairs/1

MERGE @ lap 1.00: Disabled. Cannot plan merge on first lap. Need valid SS that represent whole dataset.

1.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.716756017e+00 |

MERGE @ lap 2.00: Disabled. Waiting for lap >= 10 (--m_startLap).

2.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.611824567e+00 | Ndiff 30.418

MERGE @ lap 3.00: Disabled. Waiting for lap >= 10 (--m_startLap).

3.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.579928854e+00 | Ndiff 18.080

MERGE @ lap 4.00: Disabled. Waiting for lap >= 10 (--m_startLap).

4.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.565367190e+00 | Ndiff 19.077

MERGE @ lap 5.00: Disabled. Waiting for lap >= 10 (--m_startLap).

5.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.552412231e+00 | Ndiff 18.396

MERGE @ lap 6.00: Disabled. Waiting for lap >= 10 (--m_startLap).

6.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.547245682e+00 | Ndiff 9.353

MERGE @ lap 7.00: Disabled. Waiting for lap >= 10 (--m_startLap).

7.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.545361199e+00 | Ndiff 3.043

MERGE @ lap 8.00: Disabled. Waiting for lap >= 10 (--m_startLap).

8.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.542436507e+00 | Ndiff 8.684

MERGE @ lap 9.00: Disabled. Waiting for lap >= 10 (--m_startLap).

9.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.533845903e+00 | Ndiff 8.233

MERGE @ lap 10.00 : 1/42 accepted. Ndiff 41.88. 4 skipped.

10.000/29 after 7 sec. | 229.7 MiB | K 19 | loss 3.528709925e+00 | Ndiff 8.233

MERGE @ lap 11.00 : 1/43 accepted. Ndiff 5.72. 3 skipped.

11.000/29 after 13 sec. | 229.7 MiB | K 18 | loss 3.524443059e+00 | Ndiff 8.233

MERGE @ lap 12.00 : 1/40 accepted. Ndiff 63.51. 2 skipped.

12.000/29 after 19 sec. | 229.7 MiB | K 17 | loss 3.514142988e+00 | Ndiff 8.233

MERGE @ lap 13.00 : 0/25 accepted. Ndiff 0.00. 0 skipped.

13.000/29 after 22 sec. | 229.7 MiB | K 17 | loss 3.510973714e+00 | Ndiff 11.426

MERGE @ lap 14.00 : 1/4 accepted. Ndiff 26.72. 1 skipped.

14.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.506988279e+00 | Ndiff 11.426

MERGE @ lap 15.00: No promising candidates, so no attempts.

15.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.505872299e+00 | Ndiff 5.842

MERGE @ lap 16.00: No promising candidates, so no attempts.

16.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.505589201e+00 | Ndiff 2.350

MERGE @ lap 17.00: No promising candidates, so no attempts.

17.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.505217921e+00 | Ndiff 3.323

MERGE @ lap 18.00: No promising candidates, so no attempts.

18.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.505132466e+00 | Ndiff 2.900

MERGE @ lap 19.00: No promising candidates, so no attempts.

19.000/29 after 23 sec. | 229.7 MiB | K 16 | loss 3.505086590e+00 | Ndiff 1.972

MERGE @ lap 20.00 : 0/34 accepted. Ndiff 0.00. 0 skipped.

20.000/29 after 28 sec. | 229.7 MiB | K 16 | loss 3.504352544e+00 | Ndiff 12.591

MERGE @ lap 21.00 : 0/33 accepted. Ndiff 0.00. 0 skipped.

21.000/29 after 32 sec. | 229.7 MiB | K 16 | loss 3.502844332e+00 | Ndiff 4.034

MERGE @ lap 22.00 : 0/31 accepted. Ndiff 0.00. 0 skipped.

22.000/29 after 36 sec. | 229.7 MiB | K 16 | loss 3.502177856e+00 | Ndiff 8.703

MERGE @ lap 23.00 : 0/20 accepted. Ndiff 0.00. 0 skipped.

23.000/29 after 38 sec. | 229.7 MiB | K 16 | loss 3.501968229e+00 | Ndiff 2.968

MERGE @ lap 24.00 : 0/2 accepted. Ndiff 0.00. 0 skipped.

24.000/29 after 39 sec. | 229.7 MiB | K 16 | loss 3.501918723e+00 | Ndiff 1.676

MERGE @ lap 25.00: No promising candidates, so no attempts.

25.000/29 after 39 sec. | 229.7 MiB | K 16 | loss 3.501881600e+00 | Ndiff 1.590

MERGE @ lap 26.00: No promising candidates, so no attempts.

26.000/29 after 39 sec. | 229.7 MiB | K 16 | loss 3.501870374e+00 | Ndiff 1.171

MERGE @ lap 27.00: No promising candidates, so no attempts.

27.000/29 after 39 sec. | 229.7 MiB | K 16 | loss 3.501865781e+00 | Ndiff 0.869

MERGE @ lap 28.00: No promising candidates, so no attempts.

28.000/29 after 39 sec. | 229.7 MiB | K 16 | loss 3.501867113e+00 | Ndiff 0.638

MERGE @ lap 29.00: No promising candidates, so no attempts.

29.000/29 after 40 sec. | 229.7 MiB | K 16 | loss 3.501863089e+00 | Ndiff 0.468

... done. not converged. max laps thru data exceeded.

Good-ELBO-Pairs : Rank pairs of merges by improvement to observation model

This is much cheaper than all pairs and perhaps more principled. Let’s see how well it does.
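To give a feel for objective-driven ranking, the sketch below scores a candidate merge by how much a maximum-likelihood diagonal-Gaussian log-likelihood drops when two clusters are pooled. This is a deliberately simplified proxy and an assumption of this sketch. It is not the computation behind bnpy's 'obsmodel_elbo' option, which works with the variational objective and its priors. All function names and the toy data are hypothetical.

```python
import numpy as np


def summarize(X):
    """Sufficient statistics of a cluster: count, sum, and sum of squares."""
    return (float(X.shape[0]), X.sum(axis=0), (X ** 2).sum(axis=0))


def ml_loglik(N, Sx, Sxx):
    """Log-likelihood of a diagonal Gaussian at its maximum-likelihood fit."""
    mean = Sx / N
    var = np.maximum(Sxx / N - mean ** 2, 1e-8)  # guard against zero variance
    return -0.5 * N * np.sum(np.log(2 * np.pi * var) + 1.0)


def merge_gain(stats_a, stats_b):
    """Change in the proxy objective if clusters a and b are pooled.

    Values are <= 0 for this proxy; closer to 0 means a less costly merge."""
    merged = tuple(a + b for a, b in zip(stats_a, stats_b))
    return ml_loglik(*merged) - ml_loglik(*stats_a) - ml_loglik(*stats_b)


rng = np.random.default_rng(0)
stats_a = summarize(rng.normal(0.0, 1.0, size=(200, 2)))
stats_b = summarize(rng.normal(0.1, 1.0, size=(200, 2)))  # nearly same as a
stats_c = summarize(rng.normal(8.0, 1.0, size=(200, 2)))  # far from a

gains = {
    ('a', 'b'): merge_gain(stats_a, stats_b),
    ('a', 'c'): merge_gain(stats_a, stats_c),
}
# 'descending' order: most promising candidate first
ranked = sorted(gains, key=gains.get, reverse=True)
print(ranked)
```

Ranking by a score of this kind puts near-duplicate clusters at the front of the queue, so fewer attempts are spent on pairs that are unlikely to be accepted.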

goodelbopairs_merge_kwargs = dict(
    m_startLap = 10,
    # Set limits to number of merges attempted each lap.
    # This value specifies max number of tries for each cluster
    m_maxNumPairsContainingComp = 5,
    # Set "reactivation" limits
    # So that each cluster is eligible again after 10 passes thru dataset
    # Or when its size changes by 400%
    m_nLapToReactivate = 10,
    m_minPercChangeInNumAtomsToReactivate = 400 * 0.01,
    # Specify how to rank pairs (determines order in which merges are tried)
    # 'obsmodel_elbo' means rank pairs by improvement to observation model ELBO
    m_pair_ranking_procedure = 'obsmodel_elbo',
    m_pair_ranking_direction = 'descending',
    )

goodelbopairs_trained_model, goodelbopairs_info_dict = bnpy.run(
    dataset, 'HDPHMM', 'DiagGauss', 'memoVB',
    output_path='/tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=good_elbo_pairs/',
    moves='merge,shuffle',
    # Combine all keyword dicts into one set of keyword arguments
    **{**alg_kwargs,
       **init_kwargs,
       **hdphmm_kwargs,
       **gauss_kwargs,
       **goodelbopairs_merge_kwargs}
    )

Dataset Summary:

GroupXData

total size: 6 units

batch size: 6 units

num. batches: 1

Allocation Model: None

Obs. Data Model: Gaussian with diagonal covariance.

Obs. Data Prior: independent Gauss-Wishart prior on each dimension

Wishart params

nu = 14 ...

beta = [ 12 12] ...

Expectations

E[ mean[k]] =

[ 0 0] ...

E[ covar[k]] =

[[1. 0.]

[0. 1.]] ...

Initialization:

initname = randexamples

K = 20 (number of clusters)

seed = 1607680

elapsed_time: 0.0 sec

Learn Alg: memoVB | task 1/1 | alg. seed: 1607680 | data order seed: 8541952

task_output_path: /tmp/mocap6/trymerge-K=20-model=HDPHMM+DiagGauss-ECovMat=1*eye-merge_strategy=good_elbo_pairs/1

MERGE @ lap 1.00: Disabled. Cannot plan merge on first lap. Need valid SS that represent whole dataset.

1.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.716756017e+00 |

MERGE @ lap 2.00: Disabled. Waiting for lap >= 10 (--m_startLap).

2.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.611824567e+00 | Ndiff 30.418

MERGE @ lap 3.00: Disabled. Waiting for lap >= 10 (--m_startLap).

3.000/29 after 0 sec. | 229.7 MiB | K 20 | loss 3.579928854e+00 | Ndiff 18.080

MERGE @ lap 4.00: Disabled. Waiting for lap >= 10 (--m_startLap).

4.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.565367190e+00 | Ndiff 19.077

MERGE @ lap 5.00: Disabled. Waiting for lap >= 10 (--m_startLap).

5.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.552412231e+00 | Ndiff 18.396

MERGE @ lap 6.00: Disabled. Waiting for lap >= 10 (--m_startLap).

6.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.547245682e+00 | Ndiff 9.353

MERGE @ lap 7.00: Disabled. Waiting for lap >= 10 (--m_startLap).

7.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.545361199e+00 | Ndiff 3.043

MERGE @ lap 8.00: Disabled. Waiting for lap >= 10 (--m_startLap).

8.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.542436507e+00 | Ndiff 8.684

MERGE @ lap 9.00: Disabled. Waiting for lap >= 10 (--m_startLap).

9.000/29 after 1 sec. | 229.7 MiB | K 20 | loss 3.533845903e+00 | Ndiff 8.233

MERGE @ lap 10.00 : 4/24 accepted. Ndiff 186.96. 24 skipped.

10.000/29 after 5 sec. | 229.7 MiB | K 16 | loss 3.519121780e+00 | Ndiff 8.233

MERGE @ lap 11.00 : 1/27 accepted. Ndiff 26.46. 8 skipped.

11.000/29 after 8 sec. | 229.7 MiB | K 15 | loss 3.512205787e+00 | Ndiff 8.233

MERGE @ lap 12.00 : 0/35 accepted. Ndiff 0.00. 0 skipped.

12.000/29 after 13 sec. | 229.7 MiB | K 15 | loss 3.510583397e+00 | Ndiff 7.969

MERGE @ lap 13.00 : 0/20 accepted. Ndiff 0.00. 0 skipped.

13.000/29 after 15 sec. | 229.7 MiB | K 15 | loss 3.510164786e+00 | Ndiff 9.666

MERGE @ lap 14.00 : 0/6 accepted. Ndiff 0.00. 0 skipped.

14.000/29 after 16 sec. | 229.7 MiB | K 15 | loss 3.509795232e+00 | Ndiff 8.294

MERGE @ lap 15.00: No promising candidates, so no attempts.

15.000/29 after 16 sec. | 229.7 MiB | K 15 | loss 3.509320540e+00 | Ndiff 4.196

MERGE @ lap 16.00: No promising candidates, so no attempts.

16.000/29 after 16 sec. | 229.7 MiB | K 15 | loss 3.509297032e+00 | Ndiff 2.222

MERGE @ lap 17.00: No promising candidates, so no attempts.

17.000/29 after 16 sec. | 229.7 MiB | K 15 | loss 3.509289811e+00 | Ndiff 1.343

MERGE @ lap 18.00: No promising candidates, so no attempts.

18.000/29 after 17 sec. | 229.7 MiB | K 15 | loss 3.509286966e+00 | Ndiff 0.846

MERGE @ lap 19.00: No promising candidates, so no attempts.

19.000/29 after 17 sec. | 229.7 MiB | K 15 | loss 3.509285777e+00 | Ndiff 0.548

MERGE @ lap 20.00 : 0/18 accepted. Ndiff 0.00. 0 skipped.

20.000/29 after 19 sec. | 229.7 MiB | K 15 | loss 3.509285264e+00 | Ndiff 0.361

MERGE @ lap 21.00 : 0/26 accepted. Ndiff 0.00. 0 skipped.

21.000/29 after 22 sec. | 229.7 MiB | K 15 | loss 3.509285036e+00 | Ndiff 0.241

MERGE @ lap 22.00 : 0/35 accepted. Ndiff 0.00. 0 skipped.

22.000/29 after 27 sec. | 229.7 MiB | K 15 | loss 3.509284932e+00 | Ndiff 0.163

MERGE @ lap 23.00 : 0/20 accepted. Ndiff 0.00. 0 skipped.

23.000/29 after 29 sec. | 229.7 MiB | K 15 | loss 3.509284883e+00 | Ndiff 0.111

MERGE @ lap 24.00 : 0/6 accepted. Ndiff 0.00. 0 skipped.

24.000/29 after 30 sec. | 229.7 MiB | K 15 | loss 3.509284860e+00 | Ndiff 0.077

MERGE @ lap 25.00: No promising candidates, so no attempts.

25.000/29 after 30 sec. | 229.7 MiB | K 15 | loss 3.509284848e+00 | Ndiff 0.053

MERGE @ lap 26.00: No promising candidates, so no attempts.

26.000/29 after 30 sec. | 229.7 MiB | K 15 | loss 3.509284842e+00 | Ndiff 0.037

MERGE @ lap 27.00: No promising candidates, so no attempts.

27.000/29 after 30 sec. | 229.7 MiB | K 15 | loss 3.509284839e+00 | Ndiff 0.026

MERGE @ lap 28.00: No promising candidates, so no attempts.

28.000/29 after 31 sec. | 229.7 MiB | K 15 | loss 3.509284837e+00 | Ndiff 0.019

MERGE @ lap 29.00: No promising candidates, so no attempts.

29.000/29 after 31 sec. | 229.7 MiB | K 15 | loss 3.509284836e+00 | Ndiff 0.013

... done. not converged. max laps thru data exceeded.
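The `MERGE @ lap` lines in the logs above can be tallied to compare how many proposals each strategy spent. The sketch below parses that line format with a regular expression. The pattern is inferred from the log text shown in this example and may need adjusting for other bnpy versions. The helper name `tally_merges` is hypothetical.

```python
import re

# Matches lines such as:
#   MERGE @ lap 10.00 : 5/136 accepted. Ndiff 213.29. 54 skipped.
MERGE_LINE = re.compile(
    r"MERGE @ lap\s+(?P<lap>[\d.]+)\s*:\s*"
    r"(?P<accepted>\d+)/(?P<tried>\d+) accepted\."
    r"\s*Ndiff\s+(?P<ndiff>[\d.]+)\.\s*(?P<skipped>\d+) skipped\.")


def tally_merges(log_text):
    """Sum accepted, tried, and skipped merge counts over all matching lines."""
    totals = dict(accepted=0, tried=0, skipped=0)
    for match in MERGE_LINE.finditer(log_text):
        for key in totals:
            totals[key] += int(match.group(key))
    return totals


# Lap-10 lines copied from the three runs in this example
lap10_lines = {
    'all_pairs':
        "MERGE @ lap 10.00 : 5/136 accepted. Ndiff 213.29. 54 skipped.",
    'large_pairs':
        "MERGE @ lap 10.00 : 1/42 accepted. Ndiff 41.88. 4 skipped.",
    'good_elbo_pairs':
        "MERGE @ lap 10.00 : 4/24 accepted. Ndiff 186.96. 24 skipped.",
}
lap10_tally = {name: tally_merges(line) for name, line in lap10_lines.items()}
for name, totals in lap10_tally.items():
    print(name, totals)
```

On these lap-10 lines, the objective-driven ranking accepted 4 merges in 24 attempts, while the exhaustive plan needed 136 attempts for 5 accepted merges.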

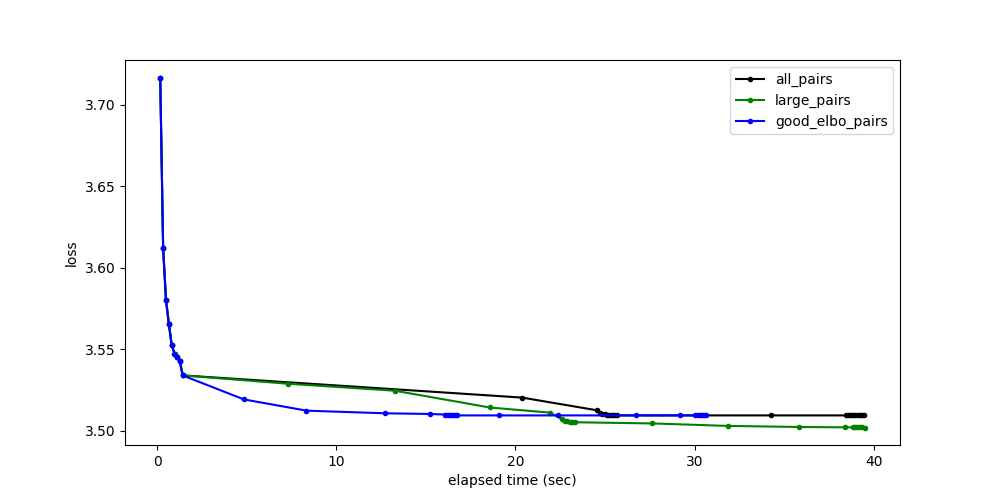

Compare loss function vs wallclock time

pylab.figure()
for info_dict, color_str, label_str in [
        (allpairs_info_dict, 'k', 'all_pairs'),
        (largepairs_info_dict, 'g', 'large_pairs'),
        (goodelbopairs_info_dict, 'b', 'good_elbo_pairs')]:
    pylab.plot(
        info_dict['elapsed_time_sec_history'],
        info_dict['loss_history'],
        '.-',
        color=color_str,
        label=label_str)
pylab.legend(loc='upper right')
pylab.xlabel('elapsed time (sec)')
pylab.ylabel('loss')


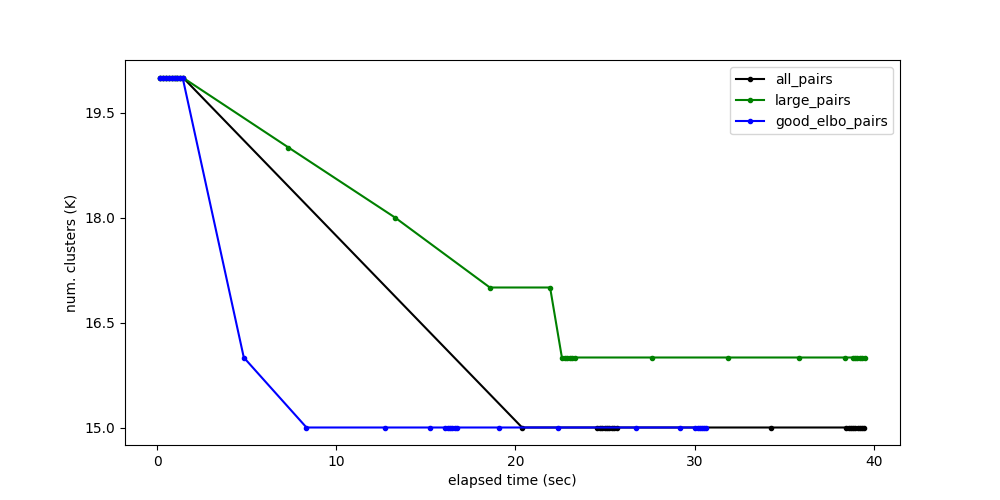

Compare number of active clusters vs wallclock time

pylab.figure()
for info_dict, color_str, label_str in [
        (allpairs_info_dict, 'k', 'all_pairs'),
        (largepairs_info_dict, 'g', 'large_pairs'),
        (goodelbopairs_info_dict, 'b', 'good_elbo_pairs')]:
    pylab.plot(
        info_dict['elapsed_time_sec_history'],
        info_dict['K_history'],
        '.-',
        color=color_str,
        label=label_str)
pylab.legend(loc='upper right')
pylab.xlabel('elapsed time (sec)')
pylab.ylabel('num. clusters (K)')
pylab.show(block=False)
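Alongside the plots, a short numeric summary can help compare the three runs. The sketch below reads the final entry of each history list, using the same keys as the plotting code (`loss_history`, `K_history`, `elapsed_time_sec_history`). To stay self-contained it uses stand-in dictionaries filled with the last two laps printed in the logs above, where elapsed times are rounded to whole seconds. With real results, pass the `*_info_dict` objects instead. The helper name `final_summary` is hypothetical.

```python
def final_summary(info_dict):
    """Return the last recorded loss, cluster count, and elapsed time."""
    return dict(
        final_loss=info_dict['loss_history'][-1],
        final_K=info_dict['K_history'][-1],
        total_sec=info_dict['elapsed_time_sec_history'][-1])


# Stand-in histories: laps 28 and 29 as printed in the logs of this example
stand_in_info = {
    'all_pairs': dict(
        loss_history=[3.509284838, 3.509284837],
        K_history=[15, 15],
        elapsed_time_sec_history=[39, 39]),
    'large_pairs': dict(
        loss_history=[3.501867113, 3.501863089],
        K_history=[16, 16],
        elapsed_time_sec_history=[39, 40]),
    'good_elbo_pairs': dict(
        loss_history=[3.509284837, 3.509284836],
        K_history=[15, 15],
        elapsed_time_sec_history=[31, 31]),
}

summaries = {name: final_summary(d) for name, d in stand_in_info.items()}
best = min(summaries, key=lambda name: summaries[name]['final_loss'])
for name, row in summaries.items():
    print(name, row)
print('lowest final loss:', best)
```

In this single run, the size-biased strategy ends with the lowest loss while keeping one more cluster, and the objective-driven strategy finishes fastest. A single seed is not enough to rank the strategies in general.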

Total running time of the script: (1 minute 49.904 seconds)